Reading Notes: Autonomous Intelligent Vehicles (Part 1)

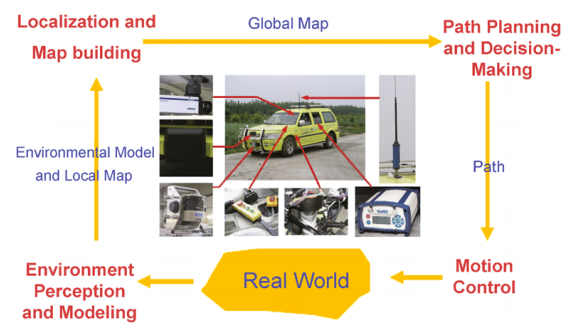

Autonomous intelligent vehicles have to finish the basic procedures:

- perceiving and modeling environment

- localizing and building maps

- planning paths and making decisions

- controlling the vehicle within a limited time budget to meet real-time requirements.

Meanwhile, we face the challenge of processing large amounts of data from multiple sensors, such as cameras, lidars, and radars.

Our goal in writing this book is threefold:

- First, it creates an updated reference book of intelligent vehicles.

- Second, this book not only presents object/obstacle detection and recognition, but also introduces vehicle lateral and longitudinal control algorithms, which benefits the readers keen to learn broadly about intelligent vehicles.

- Finally, we put emphasis on high-level concepts, and at the same time provide the low-level details of implementation.

We try to link theory, algorithms, and implementation to promote intelligent vehicle research.

This book is divided into four parts.

- The first part, Autonomous Intelligent Vehicles, presents the research motivation and purposes and the state of the art of intelligent vehicle research. It also introduces the framework of intelligent vehicles.

- The second part, Environment Perception and Modeling, introduces environment perception and modeling. It covers road detection and tracking, vehicle detection and tracking, and multiple-sensor based multiple-object tracking.

- The third part, Vehicle Localization and Navigation, presents vehicle navigation based on integrated GPS and INS. It covers an integrated DGPS/IMU positioning approach and vehicle navigation using global views.

- The fourth part, Advanced Vehicle Motion Control, introduces vehicle lateral and longitudinal motion control.

The Key Technologies of Intelligent Vehicles:

- Multi-sensor Fusion Based Environment Perception and Modeling

- Vehicle Localization and Map Building

- Path Planning and Decision-Making

- Low-Level Motion Control

Multi-sensor Fusion Based Environment Perception and Modeling

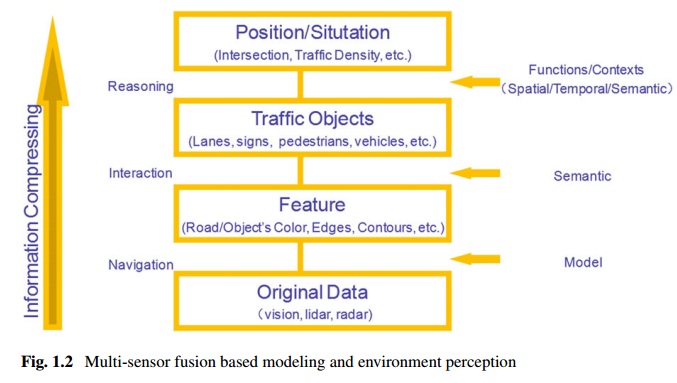

Figure 1.2 illustrates a general environment perception and modeling framework. From this framework, we can see that:

- (i) The original data are collected by various sensors;

- (ii) Various features are extracted from the original data, such as road (object) colors, lane edges, building contours;

- (iii) Semantic objects are recognized using classifiers, and consist of lanes, signs, vehicles, pedestrians;

- (iv) We can deduce driving contexts, and vehicle positions.

Multi-sensor fusion

Multi-sensor fusion is the basic framework that intelligent vehicles use to better sense the structure of the surrounding environment and to detect objects/obstacles. Roughly, the sensors used for surrounding environment perception are divided into two categories: active and passive ones. Active sensors include lidar, radar, ultrasonic and radio, while the commonly used passive sensors are infrared and visual cameras. Different sensors provide different detection precision and range, and are affected differently by the environment. That is, combining various sensors can cover both short-range and long-range objects/obstacles, and can also work in various weather conditions. Furthermore, the original data of different sensors can be fused in low-level fusion, high-level fusion, and hybrid fusion.

Dynamic Environment Modeling

Dynamic environment modeling based on moving on-vehicle cameras plays an important role in intelligent vehicles [17]. However, this is extremely challenging due to the combined effects of ego-motion, blur, and changing illumination. Therefore, traditional methods designed for gradual illumination change and small moving objects, such as background subtraction, no longer work well, even though they have been widely used in surveillance applications. Consequently, more and more approaches try to handle these issues [2, 17]. Unfortunately, reliably modeling and updating the background is still an open problem. To select different driving strategies, several broad scenarios are usually considered in path planning and decision-making, such as navigating roads, intersections, parking lots, and jammed intersections. Hence, scenario estimators, which are commonly used in the Urban Challenge, are helpful for further decision-making.

Object Detection and Tracking

In general, in a driving environment, we are interested in static/dynamic obstacles, lane markings, traffic signs, vehicles, and pedestrians. Correspondingly, object detection and tracking are the key parts of environment perception and modeling.
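To make the tracking half of "detection and tracking" more concrete, here is a minimal sketch of one common building block: associating new detections with existing tracks by greedy nearest-neighbour matching. This is not the method used in the book; the function name `associate`, the 2D `(x, y)` positions and the `gate` distance are assumptions made for illustration.

```python
import math


def associate(tracks, detections, gate=2.0):
    """Greedy nearest-neighbour association (illustrative assumption).

    tracks, detections: lists of (x, y) positions in metres.
    gate: maximum distance at which a detection may be matched to a track.
    Returns (matches, unmatched) where matches is a list of
    (track_index, detection_index) and unmatched lists detection indices
    that could start new tracks.
    """
    # All candidate pairs, closest first.
    pairs = sorted(
        (math.dist(t, d), ti, di)
        for ti, t in enumerate(tracks)
        for di, d in enumerate(detections)
    )
    matches, used_tracks, used_dets = [], set(), set()
    for distance, ti, di in pairs:
        if distance > gate:
            break  # every remaining pair is even farther apart
        if ti in used_tracks or di in used_dets:
            continue  # each track and detection is matched at most once
        matches.append((ti, di))
        used_tracks.add(ti)
        used_dets.add(di)
    unmatched = [di for di in range(len(detections)) if di not in used_dets]
    return matches, unmatched
```

Greedy matching is simple but not optimal when objects are close together; a global assignment method would be the usual next step in that case.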

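Multi-sensor data fusion makes it possible to recognize and track both short-range and long-range objects/obstacles effectively, and thereby to model the environment. As can be seen, computer vision remains the main challenge in dynamic environment modeling.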
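As a rough illustration of fusing object-level estimates, one simple form of high-level fusion, the sketch below combines independent range estimates of the same obstacle by inverse-variance weighting. The function name `fuse_estimates`, the sensor values and the variances are all made up for the example, and the estimates are assumed to be independent and unbiased.

```python
def fuse_estimates(estimates):
    """Inverse-variance weighted fusion of independent estimates.

    estimates: list of (value, variance) pairs, e.g. the range to the same
    obstacle as reported by different sensors (hypothetical numbers).
    Returns the fused (value, variance).
    """
    weights = [1.0 / variance for _, variance in estimates]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, estimates)) / total
    # The fused variance is never larger than the smallest input variance.
    return value, 1.0 / total


# Hypothetical readings: lidar is more precise than radar at this range.
lidar = (20.0, 0.25)   # metres, variance in m^2
radar = (21.0, 1.0)
fused_value, fused_variance = fuse_estimates([lidar, radar])
```

The more precise sensor dominates the result, and the fused variance is smaller than either input, which is the basic motivation for combining sensors.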

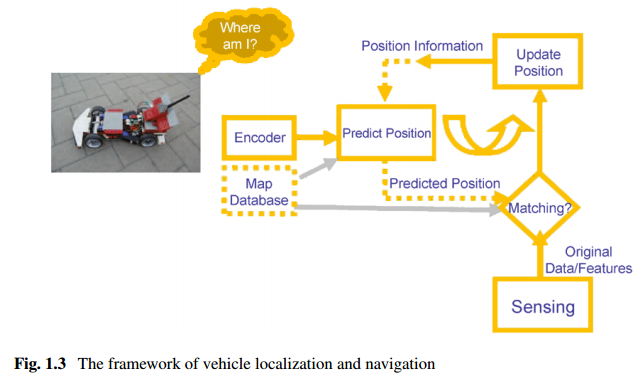

High-Precision Localization and Map Building

The goal of vehicle localization and map building is to generate a global map by combining the environment model, a local map and global information.

For vehicle localization, we face several challenges as follows:

- (i) Usually, the absolute positions from GPS/DGPS and its variants are insufficient due to signal transmission;

- (ii) The path planning and decision-making module needs more than just the vehicle absolute position as input;

- (iii) Sensor noises greatly affect the accuracy of vehicle localization.

Regarding the first issue, though GPS and its variants have been widely used in vehicle localization, their performance can degrade due to signal blockages and reflections caused by buildings and trees. In the worst case, an Inertial Navigation System (INS) can maintain a position solution.

As for the second issue, local maps fusing laser, radar, and vision data with vehicle states are used to locate and track both static/dynamic obstacles and lanes. Furthermore, global maps could contain lane geometric information, lane markings, stop signs, parking lots, and check points, and provide global environment information.

Referring to the third issue, various noise models are considered to reduce localization error.
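The interplay between GPS, INS and sensor noise can be sketched with a one-dimensional Kalman filter: the position is predicted from the measured velocity (dead reckoning, standing in for the INS), and corrected whenever a GPS fix is available. This is a simplified sketch, not the integrated DGPS/IMU approach of the book; `kalman_step` and the noise values `q` and `r` are illustrative assumptions.

```python
def kalman_step(x, p, velocity, dt, q, gps=None, r=4.0):
    """One predict/update cycle of a 1D position Kalman filter.

    x, p     : current position estimate (m) and its variance (m^2)
    velocity : measured speed (m/s), assumed to come from the INS/odometry
    q        : process noise variance added per step (assumed value)
    gps      : GPS position measurement, or None during a signal outage
    r        : GPS measurement noise variance (assumed value)
    """
    # Predict: dead reckoning, uncertainty grows.
    x = x + velocity * dt
    p = p + q
    # Update: only when a GPS fix is available.
    if gps is not None:
        k = p / (p + r)          # Kalman gain
        x = x + k * (gps - x)
        p = (1.0 - k) * p
    return x, p


# Outage for two steps, then a GPS fix pulls the estimate back.
x, p = 0.0, 1.0
for fix in (None, None, 31.0):
    x, p = kalman_step(x, p, velocity=10.0, dt=1.0, q=1.0, gps=fix)
```

During the outage the variance `p` keeps growing, which mirrors the statement that the INS can maintain a position solution but only with accumulating error.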

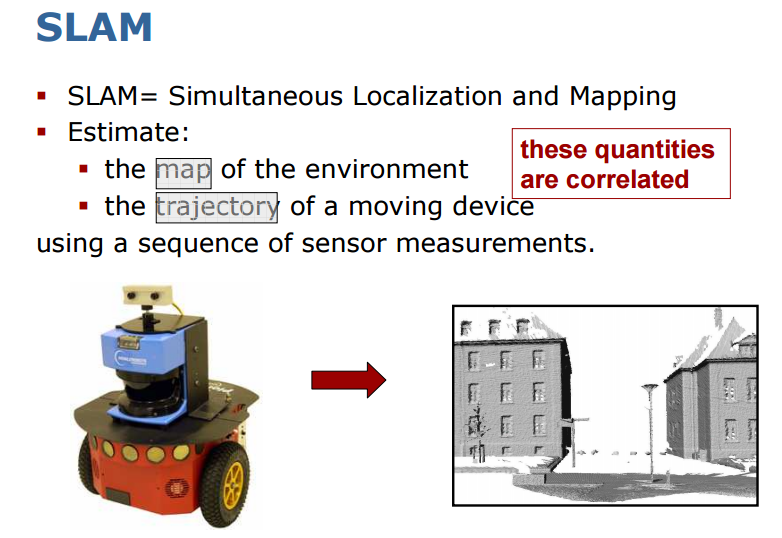

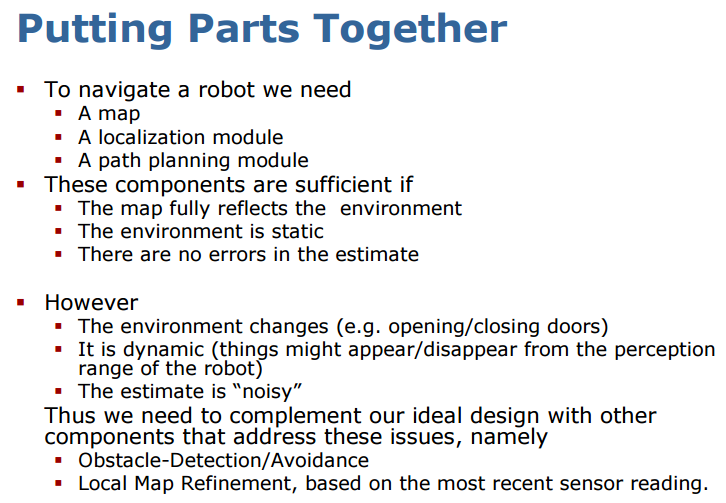

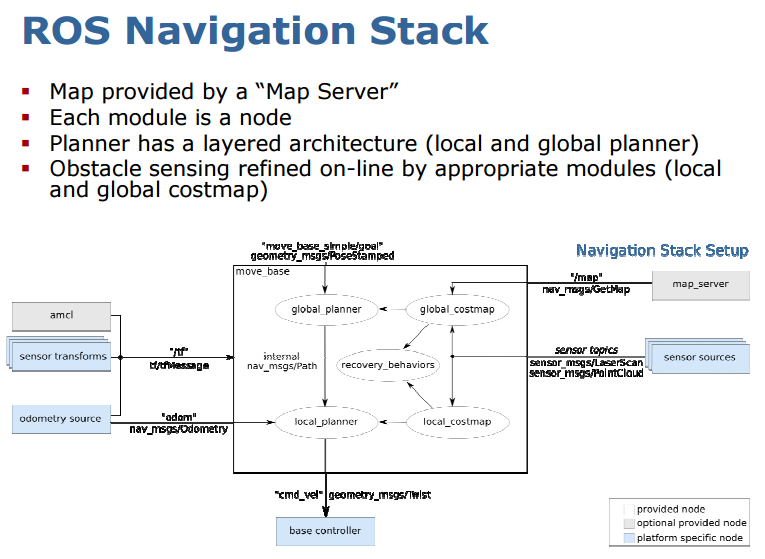

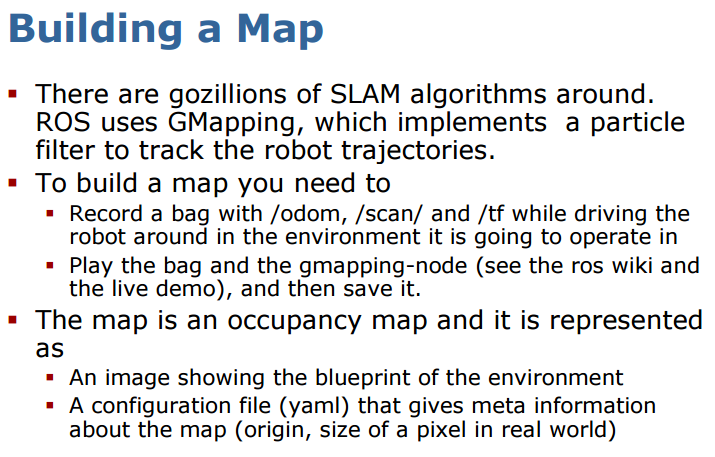

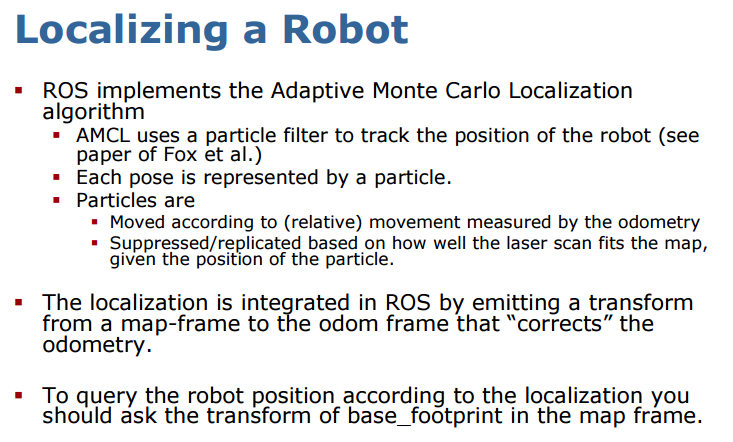

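SLAM is currently one of the most actively studied approaches to robot localization and map building. Some demonstrations that combine ROS and SLAM are shown here:

Path Planning and Decision-Making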

Global path planning is to find the fastest and safest way to get from the initial position to the goal position, while local path planning is to avoid obstacles for safe navigation.
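As an illustration of global path planning, the sketch below runs A* search on a small occupancy grid. It is a toy model under stated assumptions: the map is a 4-connected grid with unit step cost, `0` marks free cells and `1` marks obstacles, and "fastest" simply means fewest steps. The function name `plan_path` is hypothetical, and real planners work on road networks with richer cost terms.

```python
import heapq


def plan_path(grid, start, goal):
    """A* on a 4-connected occupancy grid (0 = free, 1 = obstacle).

    start, goal: (row, col) tuples. Returns the list of cells from start
    to goal, or None if the goal cannot be reached.
    """
    rows, cols = len(grid), len(grid[0])

    def heuristic(cell):
        # Manhattan distance never overestimates on a 4-connected grid.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(heuristic(start), 0, start)]
    came_from = {start: None}
    cost = {start: 0}
    while open_heap:
        _, g, cell = heapq.heappop(open_heap)
        if cell == goal:
            path = []
            while cell is not None:      # walk back to the start
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if g > cost[cell]:
            continue                     # stale heap entry
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_cost = g + 1
                if new_cost < cost.get(nxt, float("inf")):
                    cost[nxt] = new_cost
                    came_from[nxt] = cell
                    heapq.heappush(open_heap, (new_cost + heuristic(nxt), new_cost, nxt))
    return None


grid = [
    [0, 0, 0, 0],
    [1, 1, 1, 0],
    [0, 0, 0, 0],
]
path = plan_path(grid, (0, 0), (2, 0))
```

Local path planning would then adjust this global route around obstacles that appear while driving.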

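Decision-making covers road following, lane changes, parking, obstacle avoidance, and recovery from abnormal conditions. In many cases, decision-making depends on the driving context, especially in driver assistance systems.

Can the path planning algorithms currently used in Amap (Gaode) and Baidu Maps be applied here as well?

Low-Level Motion Control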

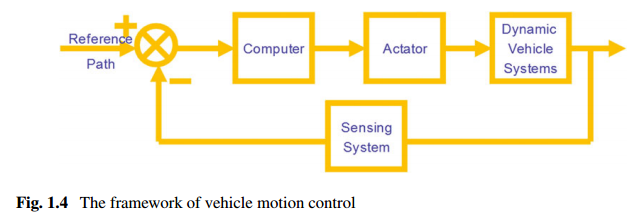

Typical applications of low-level motion control include automatic vehicle following/platooning, Adaptive Cruise Control (ACC), and lane following. Vehicle control can be broadly divided into two categories: lateral control and longitudinal control (Fig. 1.4). Longitudinal control is related to distance–velocity control between vehicles for safety and comfort purposes. Here some assumptions are made about the state of vehicles and the parameters of models, such as in the PATH project. Lateral control is to maintain the vehicle’s position in the lane center, and it can be used for vehicle guidance assistance. Moreover, it is well known that the lateral and longitudinal dynamics of a vehicle are coupled in a combined lateral and longitudinal control, where the coupling degree is a function of the tire and vehicle parameters. In general, there are two different approaches to designing vehicle controllers. One is to mimic driver operations, and the other is based on vehicle dynamic models and control strategies.

Judging from current industry trends, most Chinese startups working on autonomous or driverless vehicles enter the market through ADAS. Part of the reason is the market: what ordinary car owners will accept today is a safer, smarter car, not yet a fully self-driving one. Part of the reason is technical: the data-processing capability of mobile devices and the real-time performance of the algorithms still need to improve. As described above, ADAS-style motion control can be divided into lateral and longitudinal control. Lateral motion control mainly keeps the vehicle in the center of the road, as in lane keeping systems. Longitudinal motion control is based on distance and speed and is key to driving safety and comfort; adaptive cruise control and collision warning systems both fall under longitudinal motion control.
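The split between longitudinal and lateral control can be sketched with two very small feedback laws. This is a minimal sketch, not the controllers from the book: the longitudinal law assumes a constant time-gap spacing policy, the lateral law is a proportional law on lateral offset and heading error, the two are treated as decoupled, and every gain, limit and sign convention below is an assumed value chosen for illustration.

```python
def acc_acceleration(gap, ego_speed, lead_speed,
                     time_gap=1.5, standstill=5.0,
                     k_gap=0.2, k_speed=0.6,
                     a_min=-3.0, a_max=2.0):
    """Longitudinal control: commanded acceleration (m/s^2) for car following.

    gap        : distance to the lead vehicle (m)
    ego_speed  : own speed (m/s); lead_speed: lead vehicle speed (m/s)
    The desired gap grows with speed (constant time-gap policy, assumed).
    """
    desired_gap = standstill + time_gap * ego_speed
    accel = k_gap * (gap - desired_gap) + k_speed * (lead_speed - ego_speed)
    # Clamp to comfort/safety limits (assumed values).
    return max(a_min, min(a_max, accel))


def lane_keeping_steer(lateral_offset, heading_error,
                       k_offset=0.1, k_heading=0.8, max_steer=0.5):
    """Lateral control: steering angle (rad) that pulls toward the lane center.

    lateral_offset: signed distance from the lane center (m), positive = left
    heading_error : angle between vehicle heading and lane direction (rad),
                    positive = pointing left
    Positive steering turns left (sign conventions are assumptions).
    """
    steer = -(k_offset * lateral_offset + k_heading * heading_error)
    return max(-max_steer, min(max_steer, steer))


# Ego at 20 m/s, lead at 15 m/s and only 10 m ahead: brake at the limit.
brake = acc_acceleration(gap=10.0, ego_speed=20.0, lead_speed=15.0)
# Vehicle is 1 m left of center and parallel to the lane: steer right.
steer = lane_keeping_steer(lateral_offset=1.0, heading_error=0.0)
```

A model-based design would replace these hand-picked gains with ones derived from a vehicle dynamics model, and would account for the coupling between the two axes that the text describes.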